Project Execution Case Study

In today’s world of project management, perhaps the single most important skill that a project manager can possess is risk management. This includes identifying the risks, assessing the risks either quantitatively or qualitatively, choosing the appropriate method for handling the risks, and then monitoring and documenting the risks.

Effective risk management requires that the project manager be proactive, develop contingency plans, actively monitor the project, and respond quickly when a serious risk event occurs. Both time and money are required for effective risk management to take place.

The Space Shuttle Challenger Disaster

On January 28, 1986, the space shuttle Challenger lifted off the launch pad at 11:38 A.M., beginning the flight of mission 51-L.¹ Approximately seventy-four seconds into the flight, the Challenger was engulfed in an explosive burn and all communication and telemetry ceased. Seven brave crewmembers lost their lives. On board the Challenger were Francis R. (Dick) Scobee (commander), Michael John Smith (pilot), Ellison S. Onizuka (mission specialist one), Judith Arlene Resnik (mission specialist two), Ronald Erwin McNair (mission specialist three), S. Christa McAuliffe (payload specialist one), and Gregory Bruce Jarvis (payload specialist two). A faulty seal, or O-ring, on one of the two solid rocket boosters caused the accident.

Following the accident, significant energy was expended trying to ascertain whether the accident had been predictable. Controversy arose from the desire to assign, or to avoid, blame. Some publications called it a management failure, specifically in risk management, while others called it a technical failure.

Whenever accidents had occurred in the past at the National Aeronautics and Space Administration (NASA), an internal investigation team had been formed. But in this case, perhaps because of the visibility, the White House took the initiative in appointing an independent commission. There did exist significant justification for the commission. NASA was in a state of disarray, especially in the management ranks. The agency had been without a permanent administrator for almost four months. The turnover rate at the upper echelons of management was significantly high, and there seemed to be a lack of direction from the top down.

¹ The first digit indicates the fiscal year of the launch (e.g., “5” means 1985). The second number indicates the launch site (“1” is the Kennedy Space Center in Florida, “2” is Vandenberg Air Force Base in California). The letter represents the mission number (e.g., “C” would be the third mission scheduled). This designation system was implemented after Space Shuttle flights one through nine, which were designated STS-X. STS is the Space Transportation System and X would indicate the flight number.
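The designation scheme described in the note on mission 51-L can be sketched as a small parser. This is only an illustration of the rule as stated there; the names `MissionDesignation` and `parse_designation` are hypothetical, and only the two launch-site codes given in the note are recognized.

```python
import re
from dataclasses import dataclass

# Launch-site codes exactly as given in the note on mission 51-L.
LAUNCH_SITES = {
    "1": "Kennedy Space Center, Florida",
    "2": "Vandenberg Air Force Base, California",
}


@dataclass(frozen=True)
class MissionDesignation:
    fiscal_year_digit: int  # last digit of the fiscal year, e.g. 5 for 1985
    launch_site: str
    sequence_number: int    # "A" -> 1, "B" -> 2, "C" -> 3, ...


def parse_designation(code: str) -> MissionDesignation:
    """Split a designation such as '51-L' into its three parts (hypothetical helper)."""
    match = re.fullmatch(r"(\d)([12])-([A-Z])", code.strip().upper())
    if match is None:
        raise ValueError(f"not a recognized designation: {code!r}")
    year_digit, site, letter = match.groups()
    return MissionDesignation(
        fiscal_year_digit=int(year_digit),
        launch_site=LAUNCH_SITES[site],
        sequence_number=ord(letter) - ord("A") + 1,
    )


if __name__ == "__main__":
    print(parse_designation("51-L"))
```

Under this reading, 51-L decodes to a fiscal-year digit of 5, a Kennedy Space Center launch, and the twelfth mission scheduled.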

Another reason for appointing a Presidential Commission was the visibility of this mission. This mission had been known as the Teacher in Space mission, and Christa McAuliffe, a Concord, New Hampshire, schoolteacher, had been selected from a list of over 10,000 applicants. The nation knew the names of all of the crewmembers on board Challenger. The mission had been highly publicized for months, with announcements that Christa McAuliffe would be teaching students from aboard the Challenger on day four of the mission.

The Presidential Commission consisted of the following members:

- William P. Rogers, chairman: Former secretary of state under President Nixon and attorney general under President Eisenhower.

- Neil A. Armstrong, vice chairman: Former astronaut and spacecraft commander for Apollo 11.

- David C. Acheson: Former senior vice president and general counsel, Communications Satellite Corporation (1967–1974), and a partner in the law firm of Drinker Biddle & Reath.

- Eugene E. Covert: Professor and head, Department of Aeronautics and Astronautics at Massachusetts Institute of Technology.

- Richard P. Feynman: Physicist and professor of theoretical physics at California Institute of Technology; Nobel Prize winner in Physics, 1965.

- Robert B. Hotz: Editor-in-chief of Aviation Week & Space Technology magazine (1953–1980).

- Major General Donald J. Kutyna, USAF: Director of Space Systems and Command, Control, Communications.

- Sally K. Ride: Astronaut and mission specialist on STS-7, launched on June 18, 1983, making her the first American woman in space. She also flew on mission 41-G, launched October 5, 1984. She holds a Doctorate in Physics from Stanford University (1978) and was still an active astronaut.

- Robert W. Rummel: Vice president of Trans World Airlines and president of Robert W. Rummel Associates, Inc., of Mesa, Arizona.

- Joseph F. Sutter: Executive vice president of the Boeing Commercial Airplane Company.

- Arthur B. C. Walker, Jr.: Astronomer and professor of Applied Physics; formerly associate dean of the Graduate Division at Stanford University, and consultant to Aerospace Corporation, Rand Corporation, and the National Science Foundation.

- Albert D. Wheelon: Executive vice president, Hughes Aircraft Company.

- Brigadier General Charles Yeager, USAF (retired): Former experimental test pilot. He was the first person to break the sound barrier and the first to fly at a speed of more than 1,600 miles an hour.

- Alton G. Keel, Jr., Executive Director: Detailed to the Commission from his position in the Executive Office of the President, Office of Management and Budget, as associate director for National Security and International Affairs; formerly assistant secretary of the Air Force for Research, Development and Logistics, and Senate Staff.

The Commission interviewed more than 160 individuals, and more than thirty-five formal panel investigative sessions were held generating almost 12,000 pages of transcript. Almost 6,300 documents totaling more than 122,000 pages, along with hundreds of photographs, were examined and made a part of the Commission’s permanent database and archives. These sessions and all the data gathered added to the 2,800 pages of hearing transcript generated by the Commission in both closed and open sessions. Unless otherwise stated, all of the quotations and memos in this case study come from the direct testimony cited in the Report by the Presidential Commission (RPC).

BACKGROUND TO THE SPACE TRANSPORTATION SYSTEM

During the early 1960s, NASA’s strategic plans for post-Apollo manned space exploration rested upon a three-legged stool. The first leg was a reusable space transportation system, the space shuttle, which could transport people and equipment to low earth orbits and then return to earth in preparation for the next mission. The second leg was a manned space station that would be resupplied by the space shuttle and serve as a launch platform for space research and planetary exploration. The third leg would be planetary exploration to Mars. But by the late 1960s, the United States was involved in the Vietnam War, which was becoming costly. In addition, confidence in the government was eroding because of civil unrest and assassinations. With limited funding due to budgetary cuts, and with the lunar landing missions coming to an end, prioritization of projects was necessary. With a Democratic Congress continuously attacking the cost of space exploration, and minimal support from President Nixon, the space program was left standing on one leg only, the space shuttle.

President Nixon made it clear that funding all the programs NASA envisioned would be impossible, and that funding for even one program on the order of the Apollo Program was likewise not possible. President Nixon seemed to favor the space station concept, but this required the development of a reusable space shuttle. Thus NASA’s Space Shuttle Program became the near-term priority.

One of the reasons for the high priority given to the Space Shuttle Program was a 1972 study completed by Dr. Oskar Morgenstern and Dr. Klaus Heiss of the Princeton-based Mathematica organization. The study showed that the space shuttle would be able to orbit payloads for as little as $100 per pound based on sixty launches per year with payloads of 65,000 pounds. This provided tremendous promise for military applications such as reconnaissance and weather satellites, as well as for scientific research.

Unfortunately, the pricing data were somewhat tainted. Much of the cost data were provided by companies who hoped to become NASA contractors and who therefore provided unrealistically low cost estimates in hopes of winning future bids. The actual cost per pound would prove to be more than twenty times the original estimate. Furthermore, the main engines never achieved the 109 percent of thrust that NASA desired, thus limiting the payloads to 47,000 pounds instead of the predicted 65,000 pounds. In addition, the European Space Agency began successfully developing the capability to place satellites into orbit and began competing with NASA for the commercial satellite business.
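The gap between the 1972 projection and the eventual outcome can be made concrete with a few lines of arithmetic. The sketch below uses only the figures quoted above; the per-launch and per-year totals are implied values derived from them, not numbers taken from the study, and the factor of twenty is treated as a lower bound because the text says "more than twenty times."

```python
# Figures quoted in the text.
PROJECTED_COST_PER_LB = 100        # dollars per pound
PROJECTED_LAUNCHES_PER_YEAR = 60
PROJECTED_PAYLOAD_LB = 65_000
ACTUAL_PAYLOAD_LB = 47_000
COST_OVERRUN_FACTOR = 20           # "more than twenty times", so a lower bound

# Implied values (derived here, not stated in the source).
projected_cost_per_launch = PROJECTED_COST_PER_LB * PROJECTED_PAYLOAD_LB
projected_annual_payload_lb = PROJECTED_LAUNCHES_PER_YEAR * PROJECTED_PAYLOAD_LB
min_actual_cost_per_lb = PROJECTED_COST_PER_LB * COST_OVERRUN_FACTOR
payload_shortfall = 1 - ACTUAL_PAYLOAD_LB / PROJECTED_PAYLOAD_LB

if __name__ == "__main__":
    print(f"Implied cost per launch: ${projected_cost_per_launch:,}")
    print(f"Implied annual payload:  {projected_annual_payload_lb:,} lb")
    print(f"Actual cost per pound:   more than ${min_actual_cost_per_lb:,}")
    print(f"Payload shortfall:       {payload_shortfall:.1%}")
```

On these figures the payload capacity alone fell roughly 28 percent short of the projection, before any cost overrun is considered.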

NASA SUCCUMBS TO POLITICS AND PRESSURE

To retain shuttle funding, NASA was forced to make a series of major concessions. First, facing a highly constrained budget, NASA sacrificed the research and development necessary to produce a truly reusable shuttle, and instead accepted a design that was only partially reusable, eliminating one of the features that had made the shuttle attractive in the first place. Solid rocket boosters (SRBs) were used instead of safer liquid-fueled boosters because they required a much smaller research and development effort. Numerous other design changes were made to reduce the level of research and development required.

Second, to increase its political clout and to guarantee a steady customer base, NASA enlisted the support of the United States Air Force. The Air Force could provide the considerable political clout of the Department of Defense and it used many satellites, which required launching. However, Air Force support did not come without a price. The shuttle payload bay was required to meet Air Force size and shape requirements, which placed key constraints on the ultimate design. Even more important was the Air Force requirement that the shuttle be able to launch from Vandenberg Air Force Base in California. This constraint required a larger cross range than the Florida site, which, in turn, decreased the total allowable vehicle weight. The weight reduction required the elimination of the design’s air breathing engines, resulting in a single-pass unpowered landing. This greatly limited the safety and landing versatility of the vehicle.

As the year 1986 began, there was extreme pressure on NASA to “Fly out the Manifest.” From its inception, the Space Shuttle Program had been plagued by exaggerated expectations, funding inconsistencies, and political pressure. The ultimate vehicle and mission design were shaped almost as much by politics as by physics. President Kennedy’s declaration that the United States would land a man on the moon before the end of the decade (the 1960s) had provided NASA’s Apollo Program with high visibility, a clear direction, and powerful political backing. The Space Shuttle Program was not as fortunate; it had neither a clear direction nor consistent political backing.

Cost containment became a critical issue for NASA. In order to minimize cost, NASA designed a space shuttle system that utilized both liquid and solid propellants. Liquid propellant engines are more easily controllable than solid propellant engines. Flow of liquid propellant from the storage tanks to the engine can be throttled and even shut down in case of an emergency. Unfortunately, an all-liquid-fuel design was prohibitive because a liquid fuel system is significantly more expensive to maintain than a solid fuel system.

Solid fuel systems are less costly to maintain. However, once a solid propellant system is ignited, it cannot be easily throttled or shut down. Solid propellant rocket motors burn until all of the propellant is consumed. This could have a significant impact on safety, especially during launch, at which time the solid rocket boosters are ignited and have maximum propellant loads. Also, solid rocket boosters can be designed for reusability, whereas liquid engines are generally used only once.

The final design that NASA selected was a compromise of both solid and liquid fuel engines. The space shuttle would be a three-element system composed of the orbiter vehicle, an expendable external liquid fuel tank carrying liquid fuel for the orbiter’s engines, and two recoverable solid rocket boosters. The orbiter’s engines were liquid fuel because of the necessity for throttle capability. The two solid rocket boosters would provide the added thrust necessary to launch the space shuttle into its orbiting altitude.

In 1972, NASA selected Rockwell as the prime contractor for building the orbiter. Many industry leaders believed that other competitors who had actively participated in the Apollo Program had a competitive advantage. Rockwell, however, was awarded the contract. Rockwell’s proposal did not include an escape system. NASA officials decided against the launch escape system since it would have added too much weight to the shuttle at launch and was very expensive. There was also some concern about how effective an escape system would be if an accident occurred during launch while all of the engines were ignited. Thus, the Space Shuttle Program became the first U.S. manned spacecraft without a launch escape system for the crew.

In 1973, NASA went out for competitive bidding for the solid rocket boosters. The competitors were Morton-Thiokol, Inc. (MTI) (henceforth called Thiokol), Aerojet General, Lockheed, and United Technologies. The contract was eventually awarded to Thiokol because of its low cost, $100 million lower than the nearest competitor. Some believed that other competitors, who ranked higher in technical design and safety, should have been given the contract. NASA believed that Thiokol-built solid rocket motors would provide the lowest cost per flight.

THE SOLID ROCKET BOOSTERS

Thiokol’s solid rocket boosters had a height of approximately 150 feet and a diameter of 12 feet. The empty weight of each booster was 192,000 pounds and the full weight was 1,300,000 pounds. Once ignited, each booster provided 2.65 million pounds of thrust, which is more than 70 percent of the thrust needed to lift off the launch pad.

Thiokol’s design for the boosters was criticized by some of the competitors, and even by some NASA personnel. The boosters were to be manufactured in four segments and then shipped from Utah to the launch site, where the segments would be assembled into a single unit. The Thiokol design was largely based upon the segmented design of the Titan III solid rocket motor produced by United Technologies in the 1950s for Air Force satellite programs. Satellite programs were unmanned efforts.

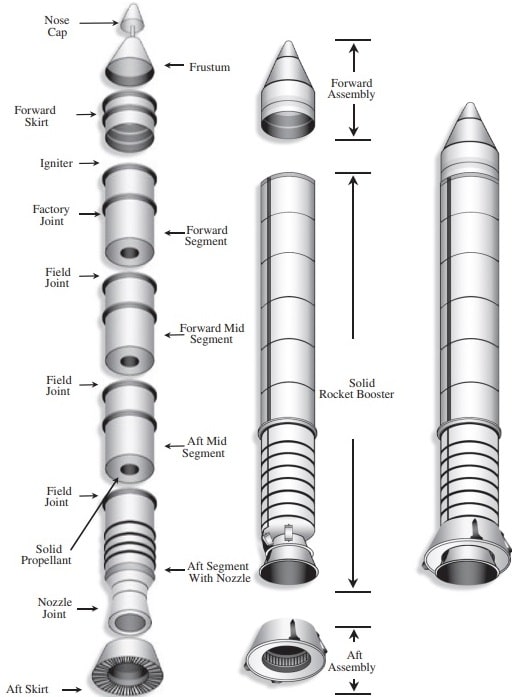

The four solid rocket sections made up the case of the booster, which essentially encased the rocket fuel and directed the flow of the exhaust gases. This is shown in Exhibit I. The cylindrical shell of the case is protected from the propellant by a layer of insulation. The mating sections of the field joint are called the tang and the clevis. One hundred and seventy-seven pins spaced around the circumference of each joint hold the tang and the clevis together. The joint is sealed in three ways. First, zinc chromate putty is placed in the gap between the mating segments and their insulation. This putty protects the second and third seals, which are rubber-like rings, called O-rings. The first O-ring is called the primary

The Solid Rocket Boosters

Kurt Hoover and Wallace T. Fowler (The University of Texas at Austin and The Texas Space Grant Consortium), “Studies in Ethics, Safety and Liability for Engineers” (Web site: http://www.tsgc.utexas.edu/archive/general/ethics/shuttle.html page 2).

The terms solid rocket booster (SRB) and solid rocket motor (SRM) will be used interchangeably.

Exhibit I. Solid rocket booster (SRB)

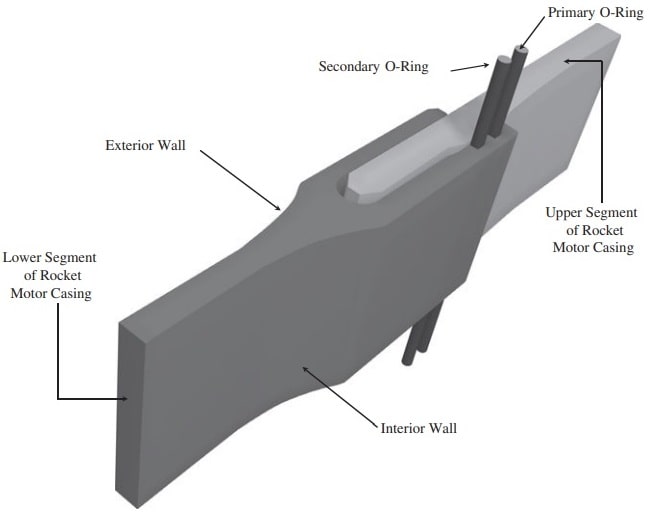

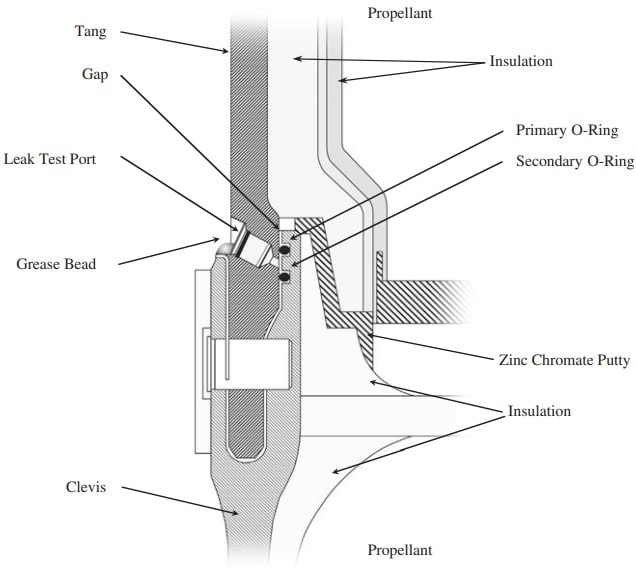

O-ring and is lodged in the gap between the tang and the clevis. The last seal is called the secondary O-ring, which is identical to the primary O-ring except it is positioned further downstream in the gap. Each O-ring is 0.280 inches in diameter. The placement of each O-ring can be seen in Exhibit II. Another component of the field joint is called the leak check port, which is shown in Exhibit III. The leak check port is designed to allow technicians to check the status of the two O-ring seals. Pressurized air is inserted through the leak check port into the gap

Exhibit II. Location of the O-rings

between the two O-rings. If the O-rings maintain the pressure, and do not let the pressurized air past the seal, the technicians know the seal is operating properly.

In the Titan III assembly process, the joints between the segmented sections contained one O-ring. Thiokol’s design had two O-rings instead of one. The second O-ring was initially considered as redundant, but included to improve safety. The purpose of the O-rings was to seal the space in the joints such that the hot exhaust gases could not escape and damage the case of the boosters.

Both the Titan III and Shuttle O-rings were made of Viton rubber, which is an elastomeric material. For comparison, rubber is also an elastomer. The elastomeric material used is a fluoroelastomer, which is an elastomer that contains fluorine. This material was chosen because of its resistance to high temperatures and its compatibility with the surrounding materials. The Titan III O-rings were molded in one piece, whereas the shuttle’s SRB O-rings would be manufactured in five sections and then glued together. Routinely, repairs would be necessary for inclusions and voids in the rubber received from the material suppliers.

“The Challenger Accident: Mechanical Causes of the Challenger Accident”; University of Texas (Web site: http://www.me.utexas.edu/~uer/challenger/chall2.html pages 1–2).

Exhibit III. Cross section showing the leak test port

BLOWHOLES

The primary purpose of the zinc chromate putty was to act as a thermal barrier that protected the O-rings from the hot exhaust. As mentioned before, the O-ring seals were tested using the leak check port to pressurize the gap between the seals. During the test, the secondary seal was pushed down into the same seated position that it occupied during ignition pressurization. However, because the leak check port was between the two O-ring seals, the primary O-ring was pushed up and seated against the putty. The positions of the O-rings during flight and during the leak check test are shown in Exhibit III.

During early flights, engineers worried that, because the putty above the primary seal could withstand high pressures, the presence of the putty would prevent the leak test from identifying problems with the primary seal. They contended that the putty would seal the gap during testing regardless of the condition of the primary seal. Since the proper operation of the primary seal was essential, engineers decided to increase the pressure used during the test to above the pressure that the putty could withstand. This would ensure that the primary O-ring was properly sealing the gap without the aid of the putty. Unfortunately, during this new procedure, the high test pressures blew holes through the putty before the primary O-ring could seal the gap.

Since the putty was on the interior of the assembled solid rocket booster, technicians could not mend the blowholes in the putty. As a result, this procedure left small, tunneled holes in the putty. These holes would allow focused exhaust gases to contact a small segment of the primary O-ring during launch. Engineers realized that this was a problem, but decided to test the seals at the high pressure despite the formation of blowholes, rather than risking a launch with a faulty primary seal.

The purpose of the putty was to prevent the hot exhaust gases from reaching the O-rings. For the first nine successful shuttle launches, NASA and Thiokol used asbestos-bearing putty manufactured by the Fuller-O’Brien Company of San Francisco. However, because of the notoriety of products containing asbestos, and the fear of potential lawsuits, Fuller-O’Brien stopped manufacturing the putty that had served the shuttle so well. This created a problem for NASA and Thiokol.

The new putty selected came from Randolph Products of Carlstadt, New Jersey. Unfortunately, with the new putty, blowholes and O-ring erosion were becoming more common to a point where the shuttle engineers became worried. Yet the new putty was still used on the boosters. Following the Challenger disaster, testing showed that, at low temperatures, the Randolph putty became much stiffer than the Fuller-O’Brien putty and lost much of its stickiness.

O-RING EROSION

If the hot exhaust gases penetrated the putty and contacted the primary O-ring, the extreme temperatures would break down the O-ring material. Because engineers were aware of the possibility of O-ring erosion, the joints were checked after each flight for evidence of erosion. The amount of O-ring erosion found on flights before the new high-pressure leak check procedure was around 12 percent. After the new high-pressure leak test procedure, the percentage of O-ring erosion was found to increase by 88 percent. High percentages of O-ring erosion in some cases allowed the exhaust gases to pass the primary O-ring and begin eroding the secondary O-ring. Some managers argued that some O-ring erosion was “acceptable” because the O-rings were found to seal the gap even if they were eroded by as much as one-third their original diameter. The engineers believed that the design and operation of the joints were an acceptable risk because a safety margin could be identified quantitatively. This numerical boundary would become an important precedent for future risk assessment.
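The "numerical boundary" in the argument above can be illustrated with the 0.280-inch O-ring diameter given earlier. The sketch below is only a restatement of the one-third rule as described; the function name and the sample erosion depths are hypothetical and are not measurements from any flight.

```python
O_RING_DIAMETER_IN = 0.280          # from the text
MAX_ERODED_FRACTION = 1 / 3         # "as much as one-third their original diameter"

# Largest erosion depth at which the O-ring was still said to seal.
MAX_TOLERATED_EROSION_IN = O_RING_DIAMETER_IN * MAX_ERODED_FRACTION


def erosion_within_margin(erosion_depth_in: float) -> bool:
    """Return True if the erosion depth is inside the one-third boundary (hypothetical helper)."""
    return erosion_depth_in <= MAX_TOLERATED_EROSION_IN


if __name__ == "__main__":
    print(f"Boundary: {MAX_TOLERATED_EROSION_IN:.4f} in")
    # Illustrative depths only, not flight data.
    for depth in (0.050, 0.100):
        print(depth, erosion_within_margin(depth))
```

A boundary of this kind says how much erosion a seal survived in testing; it does not say why erosion occurred in the first place, which is the weakness of treating it as a safety margin.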

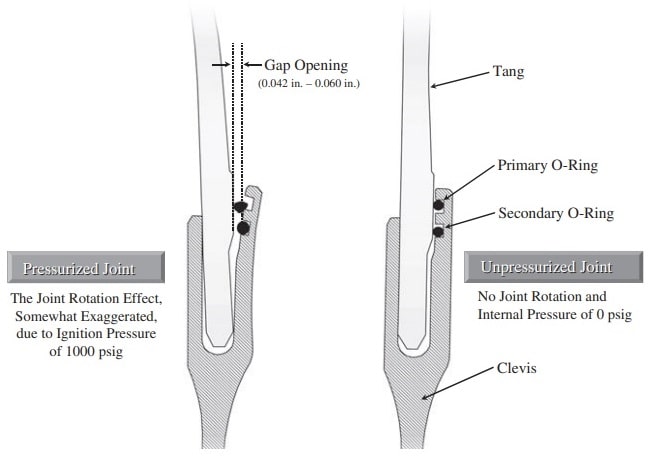

JOINT ROTATION

During ignition, the internal pressure from the burning fuel applies approximately 1000 pounds per square inch on the case wall, causing the walls to expand. Because the joints are generally stiffer than the case walls, each section tends to bulge out. The swelling of the solid rocket sections causes the tang and the clevis to become misaligned; this misalignment is called joint rotation. A diagram showing a field joint before and after joint rotation is seen in Exhibit IV. The problem with joint rotation is that it increases the gap size near the O-rings. This increase in size is extremely fast, which makes it difficult for the O-rings to follow the increasing gap and keep the seal.

Prior to ignition, the gap between the tang and the clevis is approximately 0.004 inches. At ignition, the gap enlarges to between 0.042 and 0.060 inches, but only for a maximum of 0.60 second, after which it returns to its original size.
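To give a sense of scale for joint rotation, the gap figures above can be turned into growth factors. This is a minimal sketch using only the three dimensions quoted in the text; the helper name `growth_factor` is hypothetical.

```python
INITIAL_GAP_IN = 0.004        # gap before ignition
IGNITION_GAP_MIN_IN = 0.042   # smallest gap at ignition
IGNITION_GAP_MAX_IN = 0.060   # largest gap at ignition


def growth_factor(gap_in: float) -> float:
    """How many times larger the gap is than before ignition (hypothetical helper)."""
    return gap_in / INITIAL_GAP_IN


min_growth = round(growth_factor(IGNITION_GAP_MIN_IN), 1)
max_growth = round(growth_factor(IGNITION_GAP_MAX_IN), 1)
min_opening_in = round(IGNITION_GAP_MIN_IN - INITIAL_GAP_IN, 3)
max_opening_in = round(IGNITION_GAP_MAX_IN - INITIAL_GAP_IN, 3)

if __name__ == "__main__":
    print(f"Gap grows {min_growth}x to {max_growth}x")
    print(f"O-ring must follow an opening of {min_opening_in} to {max_opening_in} in")
```

The O-ring therefore has to expand to fill a gap roughly ten to fifteen times its pre-ignition size, which is why its ability to spring back matters.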

O-RING RESILIENCE

The term O-ring resilience refers to the ability of the O-ring to return to its original shape after it has been deformed. This property is analogous to the ability of a rubber band to return to its original shape after it has been stretched. As with a rubber band, the resiliency of an O-ring is directly related to its temperature. As the temperature of the O-ring gets lower, the O-ring material becomes stiffer.

Exhibit IV. Field joint rotation

Tests have shown that an O-ring at 75°F is five times more responsive in returning to its original shape than an O-ring at 30°F. This decrease in O-ring resiliency during a cold weather launch would make the O-ring much less likely to follow the increasing gap size during joint rotation. As a result of poor O-ring resiliency, the O-ring would not seal properly.

THE EXTERNAL TANK

The solid rockets are each joined forward and aft to the external liquid fuel tank. They are not connected to the orbiter vehicle. The solid rocket motors are mounted first, and the external liquid fuel tank is put between them and connected. Then the orbiter is mounted to the external tank at two places in the back and one place forward, and those connections carry all of the structural loads for the entire system at liftoff and through the ascent phase of flight. Also connected to the orbiter, under the orbiter’s wing, are two large propellant lines 17 inches in diameter. The one on the port side carries liquid hydrogen from the hydrogen tank in the back part of the external tank. The line on the right side carries liquid oxygen from the oxygen tank at the forward end, inside the external tank.

The external tank contains about 1.6 million pounds of propellant, or about 526,000 gallons. The orbiter’s three engines burn the liquid hydrogen and liquid oxygen at a ratio of 6:1 and at a rate equivalent to emptying out a family swimming pool every 10 seconds! Once ignited, the exhaust gases leave the orbiter’s three engines at approximately 6,000 miles per hour. After the fuel is consumed, the external tank separates from the orbiter, falls to earth, and disintegrates in the atmosphere on reentry.
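The tank figures above allow a rough split of the propellant load. The sketch assumes two things the text does not state: that the 6:1 ratio is oxygen to hydrogen by mass, and that the tank is loaded in the same proportion in which the engines burn it. The results are therefore approximations for illustration only.

```python
TOTAL_PROPELLANT_LB = 1_600_000   # from the text ("about 1.6 million pounds")
TOTAL_PROPELLANT_GAL = 526_000    # from the text

# Assumption: 6 parts liquid oxygen to 1 part liquid hydrogen, by mass.
OXYGEN_PARTS = 6
HYDROGEN_PARTS = 1
total_parts = OXYGEN_PARTS + HYDROGEN_PARTS

oxygen_lb = TOTAL_PROPELLANT_LB * OXYGEN_PARTS / total_parts
hydrogen_lb = TOTAL_PROPELLANT_LB * HYDROGEN_PARTS / total_parts

# Average density of the combined load, in pounds per gallon.
average_lb_per_gal = TOTAL_PROPELLANT_LB / TOTAL_PROPELLANT_GAL

if __name__ == "__main__":
    print(f"Liquid oxygen:   about {oxygen_lb:,.0f} lb")
    print(f"Liquid hydrogen: about {hydrogen_lb:,.0f} lb")
    print(f"Average density: about {average_lb_per_gal:.2f} lb/gal")
```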

THE SPARE PARTS PROBLEM

In March 1985, NASA’s administrator, James Beggs, announced that there would be one shuttle flight per month for all of fiscal year 1985. In actuality, there were only six flights. Repairs became a problem. Continuous repairs were needed on the heat tiles required for reentry, the braking system, and the main engines’ hydraulic pumps. Parts were routinely borrowed from other shuttles. The cost of spare parts was excessively high, and NASA was looking for cost containment.

RISK IDENTIFICATION PROCEDURES

The necessity for risk management was apparent right from the start. Prior to the launch of the first shuttle in April of 1981, hazards were analyzed and subjected to a formalized hazard reduction process as described in NASA Handbook, NHB5300.4. The process required that the credibility and probability of the hazards be determined. A Senior Safety Review Board was established for overseeing the risk assessment process. For the most part, the risk assessment process was qualitative. The conclusion reached was that no single hazard or combination of hazards should prevent the launch of the first shuttle as long as the aggregate risk remained acceptable.

NASA used a rather simplistic Safety (Risk) Classification System. A quantitative method for risk assessment was not in place at NASA because gathering the data needed to generate statistical models would be expensive and labor-intensive. If the risk identification procedures were overly complex, NASA would have been buried in paperwork due to the number of components on the space shuttle. The risk classification system selected by NASA is shown in Exhibit V.

Exhibit V. Risk classification system

| Level | Description |
| --- | --- |
| Criticality 1 (C1) | Loss of life and/or vehicle if the component fails. |
| Criticality 2 (C2) | Loss of mission if the component fails. |
| Criticality 3 (C3) | All others. |
| Criticality 1R (C1R) | Redundant components exist. The failure of both could cause loss of life and/or vehicle. |
| Criticality 2R (C2R) | Redundant components exist. The failure of both could cause loss of mission. |
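The five levels in Exhibit V amount to a simple decision rule, which can be sketched as follows. The level names and descriptions are taken from the exhibit; the `classify` function and its three yes/no inputs are a hypothetical reading of how the levels relate to one another, not a NASA procedure.

```python
from enum import Enum


class Criticality(Enum):
    """Levels and descriptions as listed in Exhibit V."""
    C1 = "Loss of life and/or vehicle if the component fails."
    C2 = "Loss of mission if the component fails."
    C3 = "All others."
    C1R = ("Redundant components exist. The failure of both could cause "
           "loss of life and/or vehicle.")
    C2R = ("Redundant components exist. The failure of both could cause "
           "loss of mission.")


def classify(loss_of_life_or_vehicle: bool,
             loss_of_mission: bool,
             redundant: bool) -> Criticality:
    """Map a component's worst-case failure effect to a level (hypothetical helper)."""
    if loss_of_life_or_vehicle:
        return Criticality.C1R if redundant else Criticality.C1
    if loss_of_mission:
        return Criticality.C2R if redundant else Criticality.C2
    return Criticality.C3


if __name__ == "__main__":
    # A seal whose failure could destroy the vehicle, with no credited backup.
    level = classify(loss_of_life_or_vehicle=True, loss_of_mission=True, redundant=False)
    print(level.name, "-", level.value)
```

Note that the scheme records only the consequence of failure. It carries no estimate of how likely the failure is, which is the limitation of a purely qualitative system.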

From 1982 on, the O-ring seal was labeled Criticality 1. By 1985, there were 700 components identified as Criticality 1.

TELECONFERENCING

The Space Shuttle Program involves a vast number of people at both NASA and the contractors. Because of the geographical separation between NASA and the contractors, it became impractical to have continuous meetings. Travel between Thiokol in Utah and the Cape in Florida took one day each way. Therefore, teleconferencing became the primary method of communication and a way of life. Interface meetings were still held, but the emphasis was on teleconferencing. All locations could be linked together in one teleconference and data could be faxed back and forth as needed.

PAPERWORK CONSTRAINTS

With the rather optimistic flight schedule provided to the news media, NASA was under scrutiny and pressure to deliver. For fiscal 1986, the mission manifest called for sixteen flights. The pressure to meet schedule was about to take its toll. Safety problems had to be resolved quickly.

As the number of flights scheduled began to increase, so did the requirements for additional paperwork. The majority of the paperwork had to be completed prior to NASA’s Flight Readiness Review (FRR) meetings. Approximately one week prior to every flight, flight operations and cargo managers were required to endorse the commitment of flight readiness to the NASA associate administrator for space flight at the FRR meeting. The responsible project/element managers would conduct pre-FRR meetings with their contractors, center managers, and the NASA Level II manager. The content of the FRR meetings included the following:

- Determine overall status, as well as establish the baseline in terms of significant changes since the last mission.

- Review significant problems resolved since the last review, and significant anomalies from the previous flight.

- Review all open items and constraints remaining to be resolved before the mission.

- Present all new waivers since the last flight.

NASA personnel were working excessive overtime, including weekends, to fulfill the paperwork requirements and prepare for the required meetings. As the number of space flights increased, so did the paperwork and overtime.

The paperwork constraints were affecting the contractors as well. Additional paperwork requirements existed for problem solving and investigations. On October 1, 1985, an interoffice memo was sent from Scott Stein, space booster project engineer at Thiokol, to Bob Lund, vice president for engineering at Thiokol, and to other selected managers concerning the O-Ring Investigation Task Force:

We are currently being hog-tied by paperwork every time we try to accomplish anything. I understand that for production programs, the paperwork is necessary. However, for a priority, short schedule investigation, it makes accomplishment of our goals in a timely manner extremely difficult, if not impossible. We need the authority to bypass some of the paperwork jungle. As a representative example of problems and time that could easily be eliminated, consider assembly or disassembly of test hardware by manufacturing personnel. . . . I know the established paperwork procedures can be violated if someone with enough authority dictates it. We did that with the DR system when the FWC hardware “Tiger Team” was established. If changes are not made to allow us to accomplish work in a reasonable amount of time, then the O-ring investigation task force will never have the potency necessary to resolve problems in a timely manner.

Both NASA and the contractors were now feeling the pressure caused by the paperwork constraints.

ISSUING WAIVERS

One quick way of reducing paperwork and meetings was to issue a waiver. Historically, a waiver was a formalized process that allowed an exception to either a rule, a specification, a technical criterion, or a risk. Waivers were ways to reduce excessive paperwork requirements. Project managers and contract administrators had the authority to issue waivers, often with the intent of bypassing standard protocols in order to maintain a schedule. The use of waivers had been in place well before the manned space program even began. What is important here was not NASA’s use of the waiver, but the justification for the waiver given the risks.

NASA had issued waivers on both Criticality 1 status designations and launch constraints. In 1982, the solid rocket boosters were designated C1 by the Marshall Space Flight Center because failure of the O-rings could have caused loss of crew and the shuttle. This meant that the secondary O-rings were not considered redundant. The SRB project manager at Marshall, Larry Malloy, issued a waiver just in time for the next shuttle launch to take place as planned. Later, the O-ring designation went from C1 to C1R (i.e., a redundant process), thus partially avoiding the need for a waiver. The waiver was a necessity to keep the shuttle flying according to the original manifest.

Having a risk identification of C1 was not regarded as a sufficient reason to cancel a launch. It simply meant that component failure could be disastrous. It implied that this might be a potential problem that needed attention. If the risks were acceptable, NASA could still launch. A more serious condition was the issuing of launch constraints. Launch constraints were official NASA designations for situations in which mission safety was a serious enough problem to justify a decision not to launch. But once again, a launch constraint did not imply that the launch should be delayed. It meant that this was an important problem and needed to be addressed.

Following the 1985 mission that showed O-ring erosion and exhaust gas blow-by, a launch constraint was imposed. Yet on each of the next five shuttle missions, NASA’s Mulloy issued a launch constraint waiver allowing the flights to take place on schedule without any changes to the O-rings.

Were the waivers a violation of serious safety rules just to keep the shuttle flying? The answer is no! NASA had protocols such as policies, procedures, and rules for adherence to safety. Waivers were also protocols but for the purpose of deviating from other existing protocols. Larry Mulloy, his colleagues at NASA, and the contractors had no intention of doing evil. Waivers were simply a way of saying that the risk was believed to be acceptable.

The lifting of launch constraints and the issuance of waivers became the norm—standard operating procedure. Waivers became a way of life. If waivers were issued and the mission was completed successfully, then the same waivers would exist for the next flight and did not have to be brought up for discussion at the Flight Readiness Review meeting. The justification for the waivers seemed to be the similarity between flight launch conditions, temperature, and so on. Launching under similar conditions seemed to be important for the engineers at NASA and Thiokol because it meant that the forces acting on the O-rings were within their region of experience and could be correlated to existing data. The launch temperature effect on the O-rings was considered predictable, and therefore constituted an acceptable risk to both NASA and Thiokol, thus perhaps eliminating costly program delays that would have resulted from having to redesign the O-rings. The completion of each shuttle mission added another data point to the region of experience, thus guaranteeing the same waivers on the next launch. Flying with acceptable risk became the norm in NASA’s culture.

LAUNCH LIFTOFF SEQUENCE PROFILE: POSSIBLE ABORTS

During the countdown to liftoff, the launch team closely monitors weather conditions, not only at the launch site, but also at touchdown sites should the mission need to be prematurely aborted.

Dr. Feynman: “Would you explain why we are so sensitive to the weather?”

Mr. Moore (NASA’s deputy administrator for space flight): “Yes, there are several reasons. I mentioned the return to the landing site. We need to have visibility if we get into a situation where we need to return to the landing site after launch, and the pilots and the commanders need to be able to see the runway and so forth. So, you need a ceiling limitation on it [i.e., weather].

“We also need to maintain specifications on wind velocity so we don’t exceed crosswinds. Landing on a runway and getting too high of a crosswind may cause us to deviate off of the runway and so forth, so we have a crosswind limit. During ascent, assuming a normal flight, a chief concern is damage to tiles due to rain. We have had experiences in seeing what the effects of a brief shower can do in terms of the tiles. The tiles are thermal insulation blocks, very thick. A lot of them are very thick on the bottom of the orbiter. But if you have a raindrop and you are going at a very high velocity, it tends to erode the tiles, pock the tiles, and that causes us a grave concern regarding the thermal protection.

“In addition to that, you are worried about the turnaround time of the orbiters as well, because with the kind of tile damage that one could get in rain, you have an awful lot of work to do to go back and replace tiles back on the system. So, there are a number of concerns that weather enters into, and it is a major factor in our assessment of whether or not we are ready to launch.”

Approximately six to seven seconds prior to the liftoff, the Shuttle’s main engines (liquid fuel) ignite. These engines consume one-half million gallons of liquid fuel. It takes nine hours prior to launch to fill the liquid fuel tanks. At ignition, the engines are throttled up to 104 percent of rated power. Redundancy checks on the engines’ systems are then made. The launch site ground complex and the orbiter’s onboard computer complex check a large number of details and parameters about the main engines to make sure that everything is proper and that the main engines are performing as planned.

If a malfunction is detected, the system automatically goes into a shutdown sequence, and the mission is scrubbed. The primary concern at this point is to make the vehicle “safe.” The crew remains on board and performs a number of functions to get the vehicle into a safe mode. These functions include making sure that all propellant and electrical systems are properly safed. Ground crews at the launch pad begin servicing the launch pad. Once the launch pad is in a safe condition, the hazard and safety teams begin draining the remaining liquid fuel out of the external tank.

If no malfunction is detected during this six-second period of liquid fuel burn, then a signal is sent to ignite the two solid rocket boosters, and liftoff occurs. For the next two minutes, with all engines ignited, the shuttle goes through a Max Q, or high dynamic pressure phase, that exerts maximum pressure loads on the orbiter vehicle. Based upon the launch profile, the main engines may be throttled down slightly during the Max Q phase to lower the loads.

At 128 seconds into the launch sequence, all of the solid fuel is expended and solid rocket booster (SRB) staging occurs. The SRB parachutes are deployed. The SRBs then fall back to earth 162 miles from the launch site and are recovered for examination, cleaning, and reuse on future missions. The main liquid fuel engines are then throttled up to maximum power. At 523 seconds after liftoff, the external liquid fuel tanks are essentially empty, and the main engines are shut down. Ten to eighteen seconds later, the external tank is separated from the orbiter and disintegrates on reentry into the atmosphere.

From a safety perspective, the most hazardous period is the first 128 seconds when the SRBs are ignited. Here’s what Arnold Aldrich, manager of NASA’s STS Program, Johnson Space Center, had to say:

Mr. Aldrich: “Once the shuttle system starts off the launch pad, there is no capability in the system to separate these [solid propellant] rockets until they reach burnout. They will burn for two minutes and eight or nine seconds, and the system must stay together. There is not a capability built into the vehicle that would allow these to separate. There is a capability available to the flight crew to separate at this interface the orbiter from the tank, but that is thought to be unacceptable during the first stage when the booster rockets are on and thrusting. So, essentially the first two minutes and a little more of flight, the stack is intended and designed to stay together, and it must stay together to fly successfully.”

Exhibit VI. Abort options for shuttle

| Type of Abort | Landing Site |
|---|---|
| Once-around abort | Edwards Air Force Base |
| Trans-Atlantic abort | Dakar |
| Trans-Atlantic abort | Casablanca |
| Return-to-launch-site (RTLS) | Kennedy Space Center |

Mr. Hotz: “Mr. Aldrich, why is it unacceptable to separate the orbiter at that stage?”

Mr. Aldrich: “It is unacceptable because of the separation dynamics and the rupture of the propellant lines. You cannot perform the kind of a clean separation required for safety in the proximity of these vehicles at the velocities and the thrust levels they are undergoing, [and] the atmosphere they are flying through. In that regime, it is the design characteristic of the total system.”

If an abort is deemed necessary during the first 128 seconds, the actual abort will not begin until after SRB staging has occurred, which is after 128 seconds into the launch sequence. Based on the reason and timing of an abort, options include those listed in Exhibit VI.
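The timing rule described above can be written down as a short sketch. It is illustrative only: the function names are hypothetical, and the only figures used are the 128-second SRB staging time and the 523-second main engine shutdown time quoted in the text.

```python
SRB_STAGING_S = 128  # seconds after liftoff at which the SRBs separate (from the text)
MECO_S = 523         # seconds after liftoff at which the main engines shut down (from the text)


def earliest_abort_start(decision_time_s: float) -> float:
    """Return the time at which an abort called at decision_time_s can begin.

    An abort called while the SRBs are burning is logged but cannot be
    executed until SRB staging has occurred.
    """
    if decision_time_s < 0:
        raise ValueError("decision time is measured from liftoff")
    return max(decision_time_s, SRB_STAGING_S)


def flight_phase(t_s: float) -> str:
    """Name the ascent phase at t_s seconds after liftoff (hypothetical labels)."""
    if t_s < 0:
        raise ValueError("time is measured from liftoff")
    if t_s < SRB_STAGING_S:
        return "first stage: SRBs burning, no practical abort"
    if t_s < MECO_S:
        return "second stage: main engines only"
    return "after main engine shutdown"


print(earliest_abort_start(40))   # abort called during SRB burn
print(earliest_abort_start(200))  # abort called after staging
print(flight_phase(74))
```

The point of the sketch is that any decision made in the first 128 seconds maps to the same earliest start time, which is why this window is regarded as the most hazardous.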

Arnold Aldrich commented on different abort profiles:

Chairman Rogers: “During the two-minute period, is it possible to abort through the orbiter?”

Mr. Aldrich: “You can abort for certain conditions. You can start an abort, but the vehicle won’t do anything yet, and the intended aborts are built around failures in the main engine system, the liquid propellant systems and their controls. If you have a failure of a main engine, it is well detected by the crew and by the ground support, and you can call for a return-to-launch-site abort. That would be logged in the computer. The computer would be set up to execute it, but everything waits until the solids take you to altitude. At that time, the solids will separate in the sequence I described, and then the vehicle flies downrange some 400 miles, maybe 10 to 15 additional minutes, while all of the tank propellant is expelled through these engines.

“As a precursor to setting up the conditions for this return-to-launch-site abort to be successful towards the end of that burn downrange, using the propellants and the thrust of the main engines, the vehicle turns and actually points heads up back towards Florida. When the tank is essentially depleted, automatic signals are sent to close off the [liquid] propellant lines and to separate the orbiter, and the orbiter then does a similar approach to the one we are familiar with with orbit back to the Kennedy Space Center for approach and landing.”

Dr. Walker: “So, the propellant is expelled but not burned?”

Mr. Aldrich: “No, it is burned. You burn the system on two engines all the way down-range until it is gone, and then you turn around and come back because you don’t have enough to burn to orbit. That is the return-to-launch-site abort, and it applies during the first 240 seconds of—no, 240 is not right. It is longer than that—the first four minutes, either before or after separation you can set that abort up, but it will occur after the solids separate, and if you have a main engine anomaly after the solids separate, at that time you can start the RTLS, and it will go through that same sequence and come back.”

Dr. Ride: “And you can also only do an RTLS if you have lost just one main engine. So if you lose all three main engines, RTLS isn’t a viable abort mode.”

Mr. Aldrich: “Once you get through the four minutes, there’s a period where you now don’t have the energy conditions right to come back, and you have a forward abort, and Jesse mentioned the sites in Spain and on the coast of Africa. We have what is called a trans-Atlantic abort, and where you can use a very similar sequence to the one I just described. You still separate the solids, you still burn all the propellant out of the tanks, but you fly across and land across the ocean.”

Mr. Hotz: “Mr. Aldrich, could you recapitulate just a bit here? Is what you are telling us that for two minutes of flight, until the solids separate, there is no practical abort mode?”

Mr. Aldrich: “Yes, sir.”

Mr. Hotz: “Thank you.”

Mr. Aldrich: “A trans-Atlantic abort can cover a range of just a few seconds up to about a minute in the middle where the across-the-ocean sites are effective, and then you reach this abort once-around capability where you go all the way around and land in California or back to Kennedy by going around the earth. And finally, you have abort-to-orbit where you have enough propulsion to make orbit but not enough to achieve the exact orbital parameters that you desire. That is the way that the abort profiles are executed.

“There are many, many nuances of crew procedure and different conditions and combinations of sequences of failures that make it much more complicated than I have described it.”

THE O-RING PROBLEM

There were two kinds of joints on the shuttle—field joints that were assembled at the launch site connecting together the SRB’s cylindrical cases, and nozzle joints that connected the aft end of the case to the nozzle. During the pressure of ignition, the field joints could become bent such that the secondary O-ring could lose contact within an estimated 0.17 to 0.33 seconds after ignition. If the primary O-ring failed to seal properly before the gap within the joints opened up and the secondary seal failed, the results could be disastrous.

When the solid propellant boosters are recovered after separation, they are disassembled and checked for damage. The O-rings could show evidence of coming into contact with heat. Hot gases from the ignition sequence could blow by the primary O-ring briefly before sealing. This “blow-by” phenomenon could last for only a few milliseconds before sealing and result in no heat damage to the O-ring. If the actual sealing process takes longer than expected, then charring and erosion of the O-rings can occur. This would be evidenced by gray or black soot and erosion to the O-rings. The terms used are impingement erosion and “bypass” erosion, with the latter identified also as sooted “blow-by.”

Roger Boisjoly of Thiokol describes blow-by erosion and joint rotation as follows:

O-ring material gets removed from the cross section of the O-ring much, much faster than when you have bypass erosion or blow-by, as people have been terming it. We usually use the characteristic blow-by to define gas past it, and we use the other term [bypass erosion] to indicate that we are eroding at the same time. And so you can have blow-by without erosion, [and] you [can] have blow-by with erosion.

At the beginning of the transient cycle [initial ignition rotation, up to 0.17 seconds] . . . [the primary O-ring] is still being attacked by hot gas, and it is eroding at the same time it is trying to seal, and it is a race between, will it erode more than the time allowed to have it seal.

On January 24, 1985, STS 51-C [Flight No. 15] was launched at 51°F, which was the lowest temperature of any launch up to that time. Analyses of the joints showed evidence of damage. Black soot appeared between the primary and secondary O-rings. The engineers concluded that the cold weather had caused the O-rings to harden and move more slowly. This allowed the hot gases to blow by and erode the O-rings.

This scorching effect indicated that low temperature launches could be disastrous.

On July 31, 1985, Roger Boisjoly of Thiokol sent an interoffice memo to R. K. Lund, vice president for engineering at Thiokol:

This letter is written to insure that management is fully aware of the seriousness of the current O-ring erosion problem in the SRM joints from an engineering standpoint.

The mistakenly accepted position on the joint problem was to fly without fear of failure and to run a series of design evaluations which would ultimately lead to a solution or at least a significant reduction of the erosion problem. This position is now drastically changed as a result of the SRM 16A nozzle joint erosion which eroded a secondary O-ring with the primary O-ring never sealing.

If the same scenario should occur in a field joint (and it could), then it is a jump ball as to the success or failure of the joint because the secondary O-ring cannot respond to the clevis opening rate and may not be capable of pressurization. The result would be a catastrophe of the highest order—loss of human life.

An unofficial team (a memo defining the team and its purpose was never published) with [a] leader was formed on 19 July 1985 and was tasked with solving the problem for both the short and long term. This unofficial team is essentially nonexistent at this time. In my opinion, the team must be officially given the responsibility and the authority to execute the work that needs to be done on a non-interference basis (full time assignment until completed).

It is my honest and very real fear that if we do not take immediate action to dedicate a team to solve the problem with the field joint having the number one priority, then we stand in jeopardy of losing a flight along with all the launch pad facilities.

On August 9, 1985, a letter was sent from Brian Russell, manager of the SRM Ignition System, to James Thomas at the Marshall Space Flight Center. The memo addressed the following:

Per your request, this letter contains the answers to the two questions you asked at the July Problem Review Board telecon.

- Question: If the field joint secondary seal lifts off the metal mating surfaces during motor pressurization, how soon will it return to a position where contact is re-established?

Answer: Bench test data indicate that the O-ring resiliency (its capability to follow the metal) is a function of temperature and rate of case expansion. MTI [Thiokol] measured the force of the O-ring against Instron plattens, which simulated the nominal squeeze on the O-ring and approximated the case expansion distance and rate.

At 100°F, the O-ring maintained contact. At 75°F, the O-ring lost contact for 2.4 seconds. At 50°F, the O-ring did not re-establish contact in 10 minutes at which time the test was terminated.

The conclusion is that secondary sealing capability in the SRM field joint cannot be guaranteed.

- Question: If the primary O-ring does not seal, will the secondary seal seat in sufficient time to prevent joint leakage?

Answer: MTI has no reason to suspect that the primary seal would ever fail after pressure equilibrium is reached; i.e., after the ignition transient. If the primary O-ring were to fail from 0 to 170 milliseconds, there is a very high probability that the secondary O-ring would hold pressure since the case has not expanded appreciably at this point. If the primary seal were to fail from 170 to 330 milliseconds, the probability of the secondary seal holding is reduced. From 330 to 600 milliseconds the chance of the secondary seal holding is small. This is a direct result of the O-ring’s slow response compared to the metal case segments as the joint rotates.
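The timing windows in the answer above can be read as a simple lookup. The sketch below is illustrative only: the function name is hypothetical, and the handling of the exact boundary values (170, 330, and 600 milliseconds) is an assumption, since the letter does not say which window a boundary falls in. Treating 600 milliseconds as the end of the ignition transient is likewise an assumption drawn from the last window quoted.

```python
def secondary_seal_outlook(primary_failure_ms: float) -> str:
    """Describe the secondary O-ring's chance of holding, per the letter's windows.

    primary_failure_ms is the time after ignition at which the primary
    O-ring fails. Half-open interval boundaries are an assumption.
    """
    if primary_failure_ms < 0:
        raise ValueError("time is measured from ignition and cannot be negative")
    if primary_failure_ms < 170:
        return "very high probability of holding"
    if primary_failure_ms < 330:
        return "reduced probability of holding"
    if primary_failure_ms <= 600:
        return "small chance of holding"
    # Past the ignition transient, the letter expects no primary seal failure.
    return "outside the ignition transient"


for t_ms in (100, 250, 500):
    print(t_ms, "ms:", secondary_seal_outlook(t_ms))
```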

At NASA, the concern for a solution to the O-ring problem became not only a technical crisis, but also a budgetary crisis. In a July 23, 1985, memorandum from Richard Cook, program analyst, to Michael Mann, chief of the STS Resource Analysis Branch, the impact of the problem was noted:

Earlier this week you asked me to investigate reported problems with the charring of seals between SRB motor segments during flight operations. Discussions with program engineers show this to be a potentially major problem affecting both flight safety and program costs.

Presently three seals between SRB segments use double O-rings sealed with putty. In recent Shuttle flights, charring of these rings has occurred. The O-rings are designed so that if one fails, the other will hold against the pressure of firing. However, at least in the joint between the nozzle and the aft segment, not only has the first O-ring been destroyed, but the second has been partially eaten away.

Engineers have not yet determined the cause of the problem. Candidates include the use of a new type of putty (the putty formerly in use was removed from the market by EPA because it contained asbestos), failure of the second ring to slip into the groove which must engage it for it to work properly, or new, and as yet unidentified, assembly procedures at Thiokol. MSC is trying to identify the cause of the problem, including on-site investigation at Thiokol, and OSF hopes to have some results from their analysis within thirty days. There is little question, however, that flight safety has been and is still being compromised by potential failure of the seals, and it is acknowledged that failure during launch would certainly be catastrophic. There is also indication that staff personnel knew of this problem sometime in advance of management’s becoming apprised of what was going on.

The potential impact of the problem depends on the as yet undiscovered cause. If the cause is minor, there should be little or no impact on budget or flight rate. A worst case scenario, however, would lead to the suspension of Shuttle flights, redesign of the SRB, and scrapping of existing stockpiled hardware. The impact on the FY 1987-8 budget could be immense.

It should be pointed out that Code M management [NASA’s associate administrator for space flight] is viewing the situation with the utmost seriousness. From a budgetary standpoint, I would think that any NASA budget submitted this year for FY 1987 and beyond should certainly be based on a reliable judgment as to the cause of the SRB seal problem and a corresponding decision as to budgetary action needed to provide for its solution.

On October 30, 1985, NASA launched Flight STS 61-A [Flight no. 22] at 75°F. This flight also showed signs of sooted blow-by, but the color was significantly blacker. Although there was some heat effect, there was no measurable erosion observed on the secondary O-ring. Since blow-by and erosion had now occurred at a higher launch temperature, the original premise that launches under cold temperatures were a problem was now being questioned. Exhibit VII shows the temperature at launch of all the shuttle flights up to this time and the O-ring damage, if any.

Management at both NASA and Thiokol wanted concrete evidence that launch temperature was directly correlated to blow-by and erosion. Other than simply a “gut feel,” engineers were now stymied on how to show the direct correlation. NASA was not ready to cancel a launch simply due to an engineer’s “gut feel.”
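One way to look for the correlation that management asked for is to compare how often damage appears on cooler and warmer launches, counting the flights with no damage as well as those with damage. The sketch below does this with the figures in Exhibit VII. It is an illustration, not a reconstruction of any analysis performed at the time: the 65°F cut-off is a hypothetical value chosen only to split the data, and the flight whose casing was lost at sea is left out because no data exist for it.

```python
# (launch temperature in F, True if any erosion or blow-by incident is listed)
# Transcribed from Exhibit VII; the flight with no data is omitted.
EXHIBIT_VII = [
    (53, True), (57, True), (58, True), (63, True),
    (66, False), (67, False), (67, False), (67, False),
    (68, False), (69, False), (70, True), (70, False),
    (70, True), (70, False), (72, False), (73, False),
    (75, False), (75, True), (76, False), (76, False),
    (78, False), (79, False), (81, False),
]

THRESHOLD_F = 65  # hypothetical cut-off, chosen only for illustration


def incident_count(flights, low, high):
    """Return (flights with an incident, total flights) for low <= temp < high."""
    in_band = [hit for temp, hit in flights if low <= temp < high]
    return sum(in_band), len(in_band)


cold = incident_count(EXHIBIT_VII, float("-inf"), THRESHOLD_F)
warm = incident_count(EXHIBIT_VII, THRESHOLD_F, float("inf"))
print(f"below {THRESHOLD_F}F: {cold[0]} of {cold[1]} flights show incidents")
print(f"{THRESHOLD_F}F and above: {warm[0]} of {warm[1]} flights show incidents")
```

Including the undamaged flights matters: looking only at flights with damage, incidents appear at both 53°F and 75°F, which is what made the temperature premise seem questionable.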

William Lucas, director of the Marshall Space Flight Center, made it clear that NASA’s manifest for launches would be adhered to. Managers at NASA were pressured to resolve problems internally rather than to escalate them up the chain of command. Managers became afraid to inform anyone higher up that they had problems, even though they knew that problems existed.

Richard Feynman, Nobel laureate and member of the Rogers Commission, concluded that a NASA official altered the safety criteria so that flights could be certified on time under pressure imposed by the leadership of William Lucas. Feynman commented:

. . . They, therefore, fly in a relatively unsafe condition with a chance of failure of the order of one percent. Official management claims to believe that the probability of failure is a thousand times less.
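The gap between the two estimates is easier to appreciate when it is compounded over a series of flights. The sketch below is a rough illustration under stated assumptions: each flight is treated as independent with the same failure probability, "of the order of one percent" is taken as exactly 0.01, and the flight count of 24 is simply the number of flights listed in Exhibit VII. None of these assumptions comes from Feynman's own analysis.

```python
def prob_at_least_one_failure(p_per_flight: float, flights: int) -> float:
    """Probability of one or more failures in a series of independent flights."""
    if not 0.0 <= p_per_flight <= 1.0:
        raise ValueError("probability must lie between 0 and 1")
    if flights < 0:
        raise ValueError("number of flights cannot be negative")
    return 1.0 - (1.0 - p_per_flight) ** flights


ENGINEERING_ESTIMATE = 1e-2                        # "of the order of one percent"
MANAGEMENT_ESTIMATE = ENGINEERING_ESTIMATE / 1000  # "a thousand times less"
N_FLIGHTS = 24                                     # assumption: flights listed in Exhibit VII

for label, p in (("engineering", ENGINEERING_ESTIMATE),
                 ("management", MANAGEMENT_ESTIMATE)):
    risk = prob_at_least_one_failure(p, N_FLIGHTS)
    print(f"{label} estimate: {risk:.4%} chance of at least one failure in {N_FLIGHTS} flights")
```

Under these assumptions the per-flight figures that look small in isolation diverge sharply over a full manifest, which is the substance of Feynman's objection.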

Exhibit VII. Erosion and blow-by history (temperature in ascending order from coldest to warmest)

| Flight | Date | Temperature (°F) | Erosion Incidents | Blow-by Incidents | Comments |
|---|---|---|---|---|---|
| 51-C | 01/24/85 | 53 | 3 | 2 | Most erosion any flight; blow-by; secondary O-rings heated up |
| 41-B | 02/03/84 | 57 | 1 | | Deep, extensive erosion |
| 61-C | 01/12/86 | 58 | 1 | | O-ring erosion |
| 41-C | 04/06/84 | 63 | 1 | | O-rings heated but no damage |
| 1 | 04/12/81 | 66 | | | Coolest launch without problems |
| 6 | 04/04/83 | 67 | | | |
| 51-A | 11/08/84 | 67 | | | |
| 51-D | 04/12/85 | 67 | | | |
| 5 | 11/11/82 | 68 | | | |
| 3 | 03/22/82 | 69 | | | |
| 2 | 11/12/81 | 70 | 1 | | Extent of erosion unknown |
| 9 | 11/28/83 | 70 | | | |
| 41-D | 08/30/84 | 70 | 1 | | |
| 51-G | 06/17/85 | 70 | | | |
| 7 | 06/18/83 | 72 | | | |
| 8 | 08/30/83 | 73 | | | |
| 51-B | 04/29/85 | 75 | | | |
| 61-A | 10/30/85 | 75 | | 2 | No erosion but soot between O-rings |
| 51-I | 08/27/85 | 76 | | | |
| 61-B | 11/26/85 | 76 | | | |
| 41-G | 10/05/84 | 78 | | | |
| 51-J | 10/03/85 | 79 | | | |
| 4 | 06/27/82 | 80 | | | No data; casing lost at sea |
| 51-F | 07/29/85 | 81 | | | |

Without concrete evidence of the temperature effect on the O-rings, the secondary O-ring was regarded as a redundant safety constraint and the criticality factor was changed from C1 to C1R. Potentially serious problems were treated as anomalies peculiar to a given flight. Under the guise of anomalies, NASA began issuing waivers to maintain the flight schedules. Pressure was placed upon contractors to issue closure reports. On December 24, 1985, L. O. Wear, NASA’s SRM Program Office manager, sent a letter to Joe Kilminster, Thiokol’s vice president for the Space Booster Program:

During a recent review of the SRM Problem Review Board open problem list I found that we have 20 open problems, 11 opened during the past 6 months, 13 open over 6 months, 1 three years old, 2 two years old, and 1 closed during the past six months. As you can see our closure record is very poor. You are requested to initiate the required effort to assure more timely closures and the MTI personnel shall coordinate directly with the S&E personnel the contents of the closure reports.

PRESSURE, PAPERWORK, AND WAIVERS

To maintain the flight schedule, critical issues such as launch constraints had to be resolved or waived. This would require extensive documentation. During the Rogers Commission investigation, it seemed that there had been a total lack of coordination between NASA’s Marshall Space Flight Center and Thiokol prior to the Challenger disaster. Joe Kilminster, Thiokol’s vice president for the Space Booster Program, testified:

Mr. Kilminster: “Mr. Chairman, if I could, I would like to respond to that. In response to the concern that was expressed—and I had discussions with the team leader, the task force team leader, Mr. Don Kettner, and Mr. Russell and Mr. Ebeling. We held a meeting in my office and that was done in the October time period where we called the people who were in a support role to the task team, as well as the task force members themselves.

“In that discussion, some of the task force members were looking to circumvent some of our established systems. In some cases, that was acceptable; in other cases, it was not. For example, some of the work that they had recommended to be done was involved with full-scale hardware, putting some of these joints together with various putty layup configurations; for instance, taking them apart and finding out what we could from that inspection process.”

Dr. Sutter: “Was that one of these things that was outside of the normal work, or was that accepted as a good idea or a bad idea?”

Mr. Kilminster: “A good idea, but outside the normal work, if you will.”

Dr. Sutter: “Why not do it?”

Mr. Kilminster: “Well, we were doing it. But the question was, can we circumvent the system, the paper system that requires, for instance, the handling constraints on those flight hardware items? And I said no, we can’t do that. We have to maintain our handling system, for instance, so that we don’t stand the possibility of injuring or damaging a piece of flight hardware.

“I asked at that time if adding some more people, for instance, a safety engineer—that was one of the things we discussed in there. The consensus was no, we really didn’t need a safety engineer. We had the manufacturing engineer in attendance who was in support of that role, and I persuaded him that, typical of the way we normally worked, that he should be calling on the resources from his own organization, that is, in Manufacturing, in order to get this work done and get it done in a timely fashion.

“And I also suggested that if they ran across a problem in doing that, they should bubble that up in their management chain to get help in getting the resources to get that done. Now, after that session, it was my impression that there was improvement based on some of the concerns that had been expressed, and we did get quite a bit of work done. For your evaluation, I would like to talk a little bit about the sequence of events for this task force.”

Chairman Rogers: “Can I interrupt? Did you know at that time it was a launch constraint, a formal launch constraint?”

Mr. Kilminster: “Not an overall launch constraint as such. Similar to the words that have been said before, each Flight Readiness Review had to address any anomalies or concerns that were identified at previous launches and in that sense, each of those anomalies or concerns were established in my mind as launch constraints unless they were properly reviewed and agreed upon by all parties.”

Chairman Rogers: “You didn’t know there was a difference between the launch constraint and just considering it an anomaly? You thought they were the same thing?”

Mr. Kilminster: “No, sir. I did not think they were the same thing.”

Chairman Rogers: “My question is: Did you know that this launch constraint was placed on the flights in July 1985?”

Mr. Kilminster: “Until we resolved the O-ring problem on that nozzle joint, yes. We had to resolve that in a fashion for the subsequent flight before we would be okay to fly again.”

Chairman Rogers: “So you did know there was a constraint on that?”

Mr. Kilminster: “On a one flight per one flight basis; yes, sir.”

Chairman Rogers: “What else would a constraint mean?”

Mr. Kilminster: “Well, I get the feeling that there’s a perception here that a launch constraint means all launches, whereas we were addressing each launch through the Flight Readiness Review process as we went.”

Chairman Rogers: “No, I don’t think—the testimony that we’ve had is that a launch constraint is put on because it is a very serious problem and the constraint means don’t fly unless it’s fixed or taken care of, but somebody has the authority to waive it for a particular flight. And in this case, Mr. Mulloy was authorized to waive it, which he did, for a number of flights before 51-L. Just prior to 51-L, the papers showed the launch constraint was closed out, which I guess means no longer existed. And that was done on January 23, 1986. Now, did you know that sequence of events?”

Mr. Kilminster: “Again, my understanding of closing out, as the term has been used here, was to close it out on the problem actions list, but not as an overall standard requirement. We had to address these at subsequent Flight Readiness Reviews to ensure that we were all satisfied with the proceeding to launch.”

Chairman Rogers: “Did you understand the waiver process, that once a constraint was placed on this kind of a problem, that a flight could not occur unless there was a formal waiver?”

Mr. Kilminster: “Not in the sense of a formal waiver, no, sir.”

Chairman Rogers: “Did any of you? Didn’t you get the documents saying that?”

Mr. McDonald: “I don’t recall seeing any documents for a formal waiver.”

MISSION 51-L

On January 25, 1986, questionable weather caused a delay of Mission 51-L to January 27. On January 26, the launch was reconfirmed for 9:37 A.M. on the 27th. However, on the morning of January 27, a malfunction with the hatch, combined with high crosswinds, caused another delay. All preliminary procedures had been completed and the crew had just boarded when the first problem appeared. A microsensor on the hatch indicated that the hatch was not shut securely. It turned out that the hatch was shut securely but the sensor had malfunctioned. Valuable time was lost in determining the problem.

After the hatch was finally closed, the external handle could not be removed. The threads on the connecting bolt were stripped and instead of cleanly disengaging when turned, simply spun around. Attempts to use a portable drill to remove the handle failed. Technicians on the scene asked Mission Control for permission to saw off the bolt. Fearing some form of structural stress to the hatch, engineers made numerous time-consuming calculations before giving the go-ahead to cut off the bolt. The entire process consumed almost two hours before the countdown resumed.

However, the misfortunes continued. During the attempts to verify the integrity of the hatch and remove the handle, the wind had been steadily rising. Chief Astronaut John Young flew a series of approaches in the shuttle training aircraft and confirmed the worst fears of Mission Control. The crosswinds at the Cape were in excess of the level allowed for the abort contingency. The opportunity had been missed. The mission was then reset to launch the next day, January 28, at 9:38 A.M. Everyone was quite discouraged, since the extremely cold weather forecast for Tuesday could further postpone the launch.

Weather conditions indicated that the temperature at launch could be as low as 26°F. This would be much colder and well below the temperature range that the O-rings were designed to operate in. The components of the solid rocket motors were qualified only to 40°F at the lower limit. Undoubtedly, when the sun came up and launch time approached, both the air temperature and vehicle would warm up, but there was still concern. Would the ambient temperature be high enough to meet the launch requirements? NASA’s Launch Commit Criteria stated that no launch should occur at temperatures below 31°F. There were also worries over any permanent effects on the shuttle due to the cold overnight temperatures. NASA became concerned and asked Thiokol for their recommendation on whether or not to launch. NASA admitted under testimony that if Thiokol had recommended not launching, then the launch would not have taken place.
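The gap between the forecast and the stated limits can be laid out as a simple calculation. The Python sketch below is illustrative only: the names `FORECAST_LOW_F`, `LIMITS_F`, and `margins` are hypothetical, and setting the forecast air temperature against both limits is a simplifying assumption, since the Launch Commit Criteria concern ambient conditions while the qualification limit concerns the hardware itself.

```python
# Temperature figures quoted in the case study, in degrees Fahrenheit.
FORECAST_LOW_F = 26  # forecast low at launch

# Hypothetical lookup table; the labels are ours, the numbers are from the text.
LIMITS_F = {
    "NASA Launch Commit Criteria (ambient)": 31,
    "Solid rocket motor qualification (lower limit)": 40,
}


def margins(forecast_f, limits):
    """Return {limit name: forecast minus limit}.

    A negative margin means the forecast falls below that limit.
    """
    return {name: forecast_f - limit for name, limit in limits.items()}


if __name__ == "__main__":
    for name, margin in margins(FORECAST_LOW_F, LIMITS_F).items():
        status = "not met" if margin < 0 else "met"
        print(f"{name}: {status} (margin {margin:+d} F)")
```

With the figures quoted in the text, the forecast low falls 5°F short of the Launch Commit Criteria and 14°F short of the motor qualification limit.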

At 5:45 P.M. eastern standard time, a teleconference was held between the Kennedy Space Center, Marshall Space Flight Center, and Thiokol. Bob Lund, vice president for engineering, summarized the concerns of the Thiokol engineers: in Thiokol’s opinion, the launch should be delayed until noontime or even later so that a launch temperature of at least 53°F could be achieved. Thiokol’s engineers were concerned that no data were available for launches at the forecast temperature of 26°F. This was the first time in fourteen years that Thiokol had recommended not to launch.

The design validation tests originally done by Thiokol covered only a narrow temperature range. The temperature data did not include any temperatures below 53°F. The O-rings from Flight 51-C, which had been launched under cold conditions the previous year, showed very significant erosion. These were the only data available on the effects of cold, but all of the Thiokol engineers agreed that the cold weather would decrease the elasticity of the synthetic rubber O-rings, which in turn might cause them to seal slowly and allow hot gases to surge through the joint.

Another teleconference was set up for 8:45 P.M. so that more parties could be involved in the decision. Meanwhile, Thiokol was asked to fax all relevant and supporting charts to all parties involved in the 8:45 P.M. teleconference.

The following information was included in the pages that were faxed:

Blow-by History:

SRM-15 Worst Blow-by

- Two case joints (80°), (110°) Arc

- Much worse visually than SRM-22

SRM-22 Blow-by

- Two case joints (30–40°)

SRM-13A, 15, 16A, 18, 23A, 24A

- Nozzle blow-by

Field Joint Primary Concerns—SRM-25

- A temperature lower than the current database results in changing primary O-ring sealing timing function

- SRM-15A—80° arc black grease between O-rings

SRM-15B—110° arc black grease between O-rings

- Lower O-ring squeeze due to lower temp

- Higher O-ring shore hardness

- Thicker grease viscosity

- Higher O-ring pressure activation time

- If actuation time increases, threshold of secondary seal pressurization capability is approached.

- If threshold is reached then secondary seal may not be capable of being pressurized.

Conclusions:

- Temperature of O-ring is not only parameter controlling blow-by:

SRM-15 with blow-by had an O-ring temp at 53°F.

SRM-22 with blow-by had an O-ring temp at 75°F.

Four development motors with no blow-by were tested at O-ring temp of 47° to 52°F.

Development motors had putty packing which resulted in better performance.

- At about 50°F blow-by could be experienced in case joints.

- Temp for SRM-25 on 1-28-86 launch will be: 29°F at 9 A.M.; 38°F at 2 P.M.

- Have no data that would indicate SRM-25 is different than SRM-15 other than temp.

Recommendations:

- O-ring temp must be ≥ 53°F at launch.

Development motors at 47° to 52°F with putty packing had no blow-by.

SRM-15 (the best simulation) worked at 53°F.

- Project ambient conditions (temp & wind) to determine launch time.

From NASA’s perspective, the launch window was from 9:30 A.M. to 12:30 P.M. on January 28. This was based on weather conditions and visibility, not only at the launch site but also at the landing sites should an abort be necessary. An additional consideration was the fact that the temperature might not reach 53°F prior to the launch window closing. Actually, the temperature at the Kennedy Space Center was not expected to reach 50°F until two days later. NASA was hoping that Thiokol would change its mind and recommend launch.
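The concern that the temperature might not reach 53°F before the window closed can be checked against the two forecast points on Thiokol’s chart. The sketch below is a rough illustration in Python, not a reconstruction of any analysis performed at the time: it assumes, purely for illustration, that the temperature rises linearly between the 9 A.M. and 2 P.M. forecast values, and the names `FORECAST`, `WINDOW`, `interpolate`, and `reaches_recommendation` are hypothetical.

```python
# Forecast points from the faxed chart: 29 F at 9 A.M. and 38 F at 2 P.M.
# ASSUMPTION: linear warming between the two points, for illustration only.
FORECAST = [(9.0, 29.0), (14.0, 38.0)]  # (hour of day, degrees F)
WINDOW = (9.5, 12.5)                    # launch window: 9:30 A.M. to 12:30 P.M.
RECOMMENDED_MIN_F = 53.0                # Thiokol's recommended minimum


def interpolate(hour, points=FORECAST):
    """Linearly interpolate the forecast temperature at a given hour."""
    (h0, t0), (h1, t1) = points
    return t0 + (t1 - t0) * (hour - h0) / (h1 - h0)


def reaches_recommendation(window=WINDOW, minimum=RECOMMENDED_MIN_F):
    """True if the interpolated temperature meets the minimum inside the window.

    Under linear interpolation the highest value lies at one end of the window,
    so checking both endpoints is sufficient.
    """
    warmest = max(interpolate(window[0]), interpolate(window[1]))
    return warmest >= minimum


if __name__ == "__main__":
    for hour in WINDOW:
        print(f"{hour:>5.2f} h: {interpolate(hour):.1f} F")
    print("Reaches 53 F within window:", reaches_recommendation())
```

Under this assumption the temperature would be roughly 30°F when the window opened and roughly 35°F when it closed, about 18°F short of Thiokol’s recommended minimum.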

THE SECOND TELECONFERENCE

At the second teleconference, Bob Lund once again asserted Thiokol’s recommendation not to launch below 53°F. NASA’s Mulloy then burst out over the teleconference network:

My God, Morton Thiokol! When do you want me to launch—next April?

NASA challenged Thiokol’s interpretation of the data and argued that Thiokol was inappropriately attempting to establish a new Launch Commit Criterion just prior to launch. NASA asked Thiokol to reevaluate its conclusions. Crediting NASA’s comments with some validity, Thiokol then requested a five-minute off-line caucus. In the room at Thiokol were fourteen engineers, namely:

- Jerald Mason, senior vice president, Wasatch Operations

- Calvin Wiggins, vice president and general manager, Space Division

- Joe C. Kilminster, vice president, Space Booster Programs

- Robert K. Lund, vice president, Engineering

- Larry H. Sayer, director, Engineering and Design

- William Macbeth, manager, Case Projects, Space Booster Project

- Donald M. Ketner, supervisor, Gas Dynamics Section and head Seal Task Force

- Roger Boisjoly, member, Seal Task Force

- Arnold R. Thompson, supervisor, Rocket Motor Cases

- Jack R. Kapp, manager, Applied Mechanics Department

- Jerry Burn, associate engineer, Applied Mechanics

- Joel Maw, associate scientist, Heat Transfer Section

- Brian Russell, manager, Special Projects, SRM Project

- Robert Ebeling, manager, Ignition System and Final Assembly, SRB Project

There were no safety personnel in the room because nobody thought to invite them. The caucus lasted some thirty minutes. Thiokol (specifically Joe Kilminster) then returned to the teleconference stating that they were unable to sustain a valid argument that temperature affects O-ring blow-by and erosion. Thiokol then reversed its position and was now recommending launch.

NASA stated that the launch of the Challenger would not take place without Thiokol’s approval. But when Thiokol reversed its position following the caucus and agreed to launch, NASA interpreted this as an acceptable risk. The launch would now take place.

Mr. McDonald (Thiokol): “The assessment of the data was that the data was not totally conclusive, that the temperature could affect everything relative to the seal. But there was data that indicated that there were things going in the wrong direction, and this was far from our experience base.

“The conclusion being that Thiokol was directed to reassess all the data because the recommendation was not considered acceptable at that time of [waiting for] the 53 degrees [to occur]. NASA asked us for a reassessment and some more data to show that the temperature in itself can cause this to be a more serious concern than we had said it would be. At that time Thiokol in Utah said that they would like to go off-line and caucus for about five minutes and reassess what data they had there or any other additional data.

“And that caucus lasted for, I think, a half hour before they were ready to go back on. When they came back on they said they had reassessed all the data and had come to the conclusions that the temperature influence, based on the data they had available to them, was inconclusive and therefore they recommended a launch.”

During the Rogers Commission testimony, NASA’s Mulloy stated his thought process in requesting Thiokol to rethink their position:

General Kutyna: “You said the temperature had little effect?”

Mr. Mulloy: “I didn’t say that. I said I can’t get a correlation between O-ring erosion, blow-by and O-ring, and temperature.”

General Kutyna: “51-C was a pretty cool launch. That was January of last year.”

Mr. Mulloy: “It was cold before then but it was not that much colder than other launches.”

General Kutyna: “So it didn’t approximate this particular one?”

Mr. Mulloy: “Unfortunately, that is one you look at and say, aha, is it related to a temperature gradient and the cold. The temperature of the O-ring on 51-C, I believe, was 53 degrees. We have fired motors at 48 degrees.”

Mulloy asserted that he had not pressured Thiokol into changing its position. Yet Thiokol’s engineers testified that they believed they were being pressured.

Roger Boisjoly, one of Thiokol’s experts on O-rings, was present during the caucus and vehemently opposed the launch. During testimony, Boisjoly described his impressions of what occurred during the caucus:

“The caucus was started by Mr. Mason stating that a management decision was necessary. Those of us who were opposed to the launch continued to speak out, and I am specifically speaking of Mr. Thompson and myself because in my recollection, he and I were the only ones who vigorously continued to oppose the launch. And we were attempting to go back and rereview and try to make clear what we were trying to get across, and we couldn’t understand why it was going to be reversed.

“So, we spoke out and tried to explain again the effects of low temperature. Arnie actually got up from his position which was down the table and walked up the table and put a quad pad down in front of the table, in front of the management folks, and tried to sketch out once again what his concern was with the joint, and when he realized he wasn’t getting through, he just stopped.

“I tried one more time with the photos. I grabbed the photos and I went up and discussed the photos once again and tried to make the point that it was my opinion from actual observations that temperature was indeed a discriminator, and we should not ignore the physical evidence that we had observed.

“And again, I brought up the point that SRM-15 had a 110 degree arc of black grease, while SRM-22 had a relatively different amount, which was less and wasn’t quite as black. I also stopped when it was apparent that I could not get anybody to listen.”

Dr. Walker: “At this point did anyone else [i.e., engineers] speak up in favor of the launch?”

Mr. Boisjoly: “No, sir. No one said anything, in my recollection. Nobody said a word. It was then being discussed amongst the management folks. After Arnie and I had our last say, Mr. Mason said we have to make a management decision. He turned to Bob Lund and asked him to take off his engineering hat and put on his management hat. From this point on, management formulated the points to base their decision on. There was never one comment in favor, as I have said, of launching by any engineer or other nonmanagement person in the room before or after the caucus. I was not even asked to participate in giving any input to the final decision charts.

“I went back on the net with the final charts or final chart, which was the rationale for launching, and that was presented by Mr. Kilminster. It was handwritten on a notepad, and he read from that notepad. I did not agree with some of the statements that were being made to support the decision. I was never asked nor polled, and it was clearly a management decision from that point.

“I must emphasize, I had my say, and I never [would] take [away] any management right to take the input of an engineer and then make a decision based upon that input, and I truly believe that. I have worked at a lot of companies, and that has been done from time to time, and I truly believe that, and so there was no point in me doing anything any further [other] than [what] I had already attempted to do.

“I did not see the final version of the chart until the next day. I just heard it read. I left the room feeling badly defeated, but I felt I really did all I could to stop the launch. I felt personally that management was under a lot of pressure to launch, and they made a very tough decision, but I didn’t agree with it.

“One of my colleagues who was in the meeting summed it up best. This was a meeting where the determination was to launch, and it was up to us to prove beyond a shadow of a doubt that it was not safe to do so. This is in total reverse to what the position usually is in a preflight conversation or a Flight Readiness Review. It is usually exactly opposite that.”

Dr. Walker: “Do you know the source of the pressure on management that you alluded to?”